The AI Trap: When Technical Leverage Outpaces Structural Control

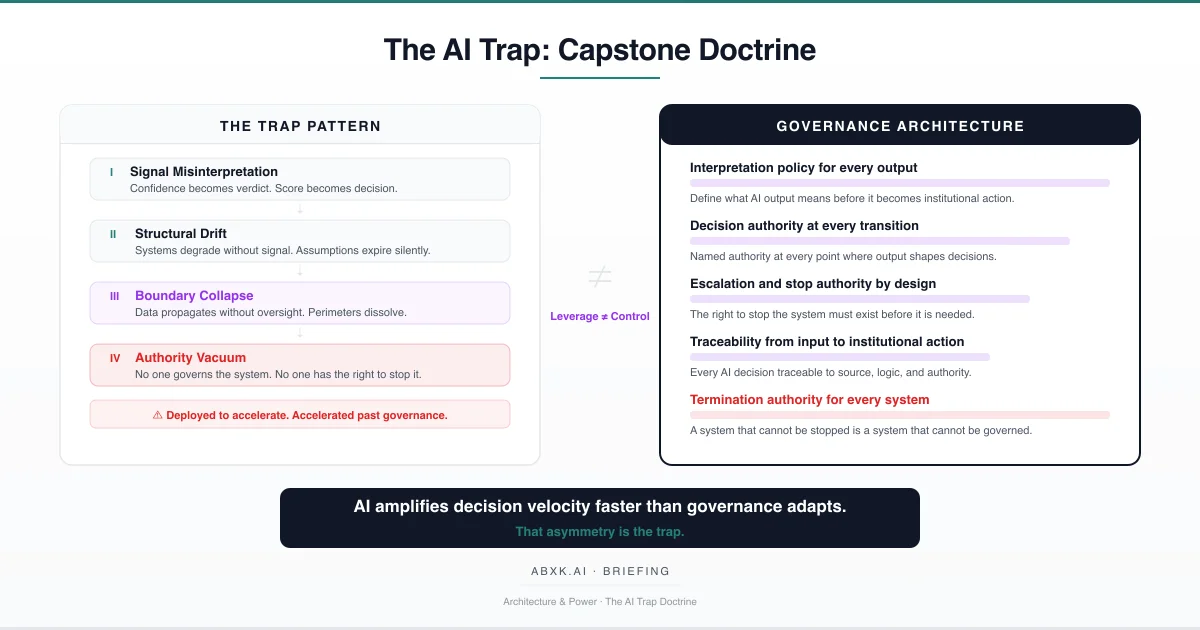

AI systems amplify decision velocity faster than governance adapts. Organizations deploy capabilities that exceed their structural capacity to govern — creating …

Focused analyses of AI system architecture, decision boundaries, and operational risk in production environments.

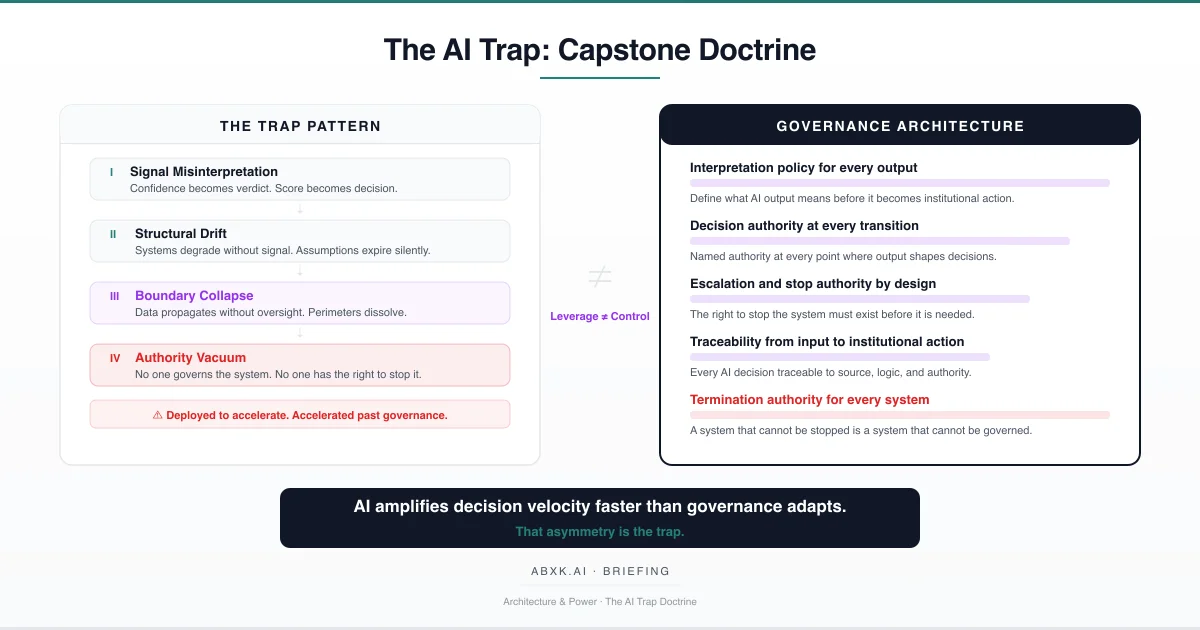

Multi-agent AI systems distribute decision-making across autonomous components that coordinate, delegate, and act without centralized authority. When …

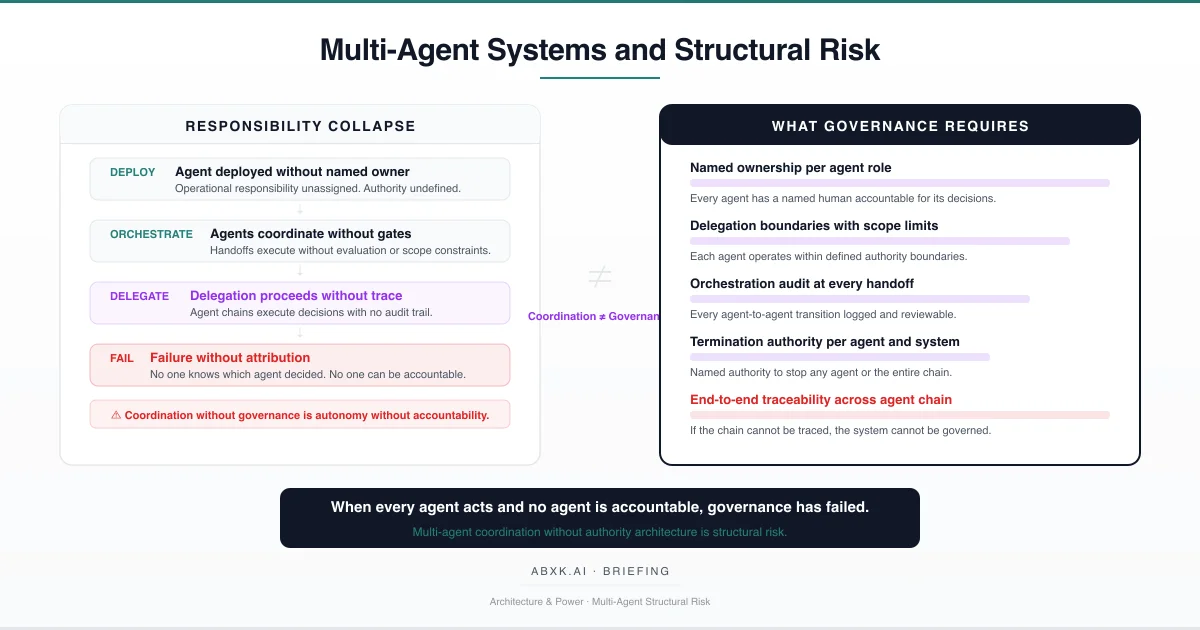

Organizations designate human reviewers as control mechanisms for AI systems — placing people in approval workflows, review queues, and oversight roles. But …

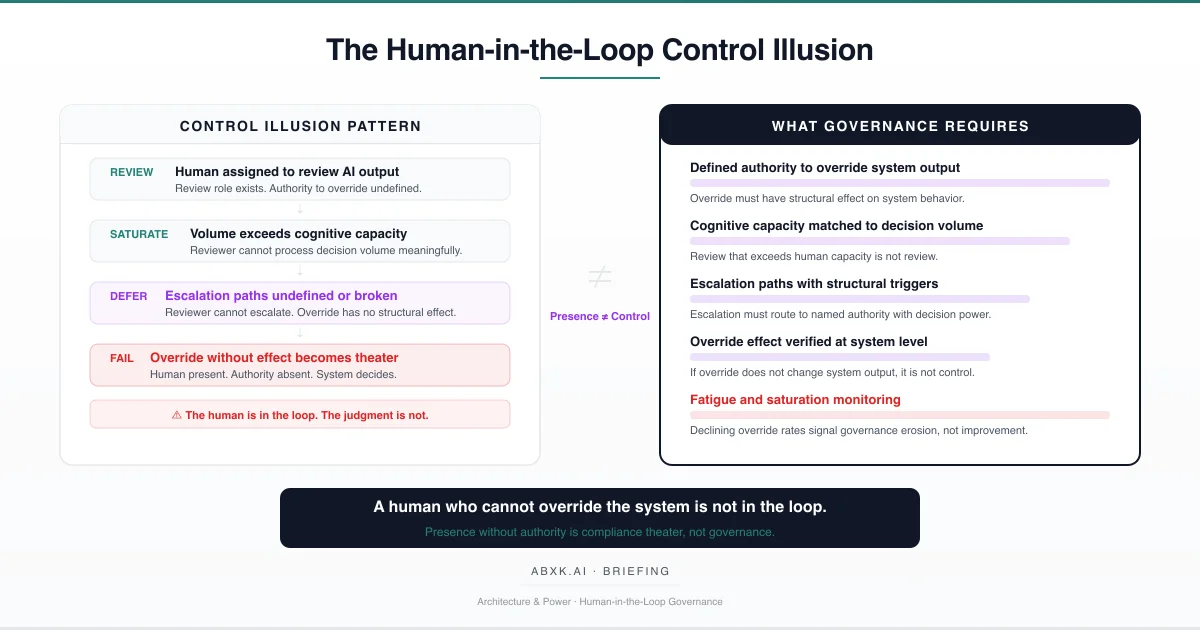

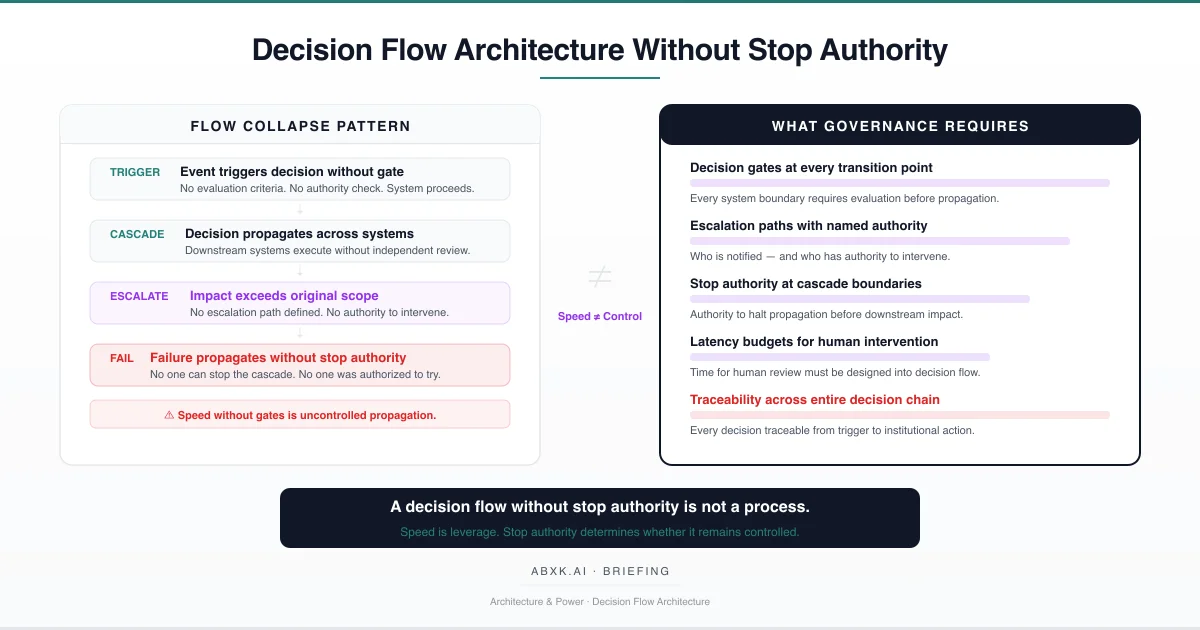

Complex AI systems do not make single decisions. They execute decision flows — chains of interdependent outputs where each step triggers, constrains, or …

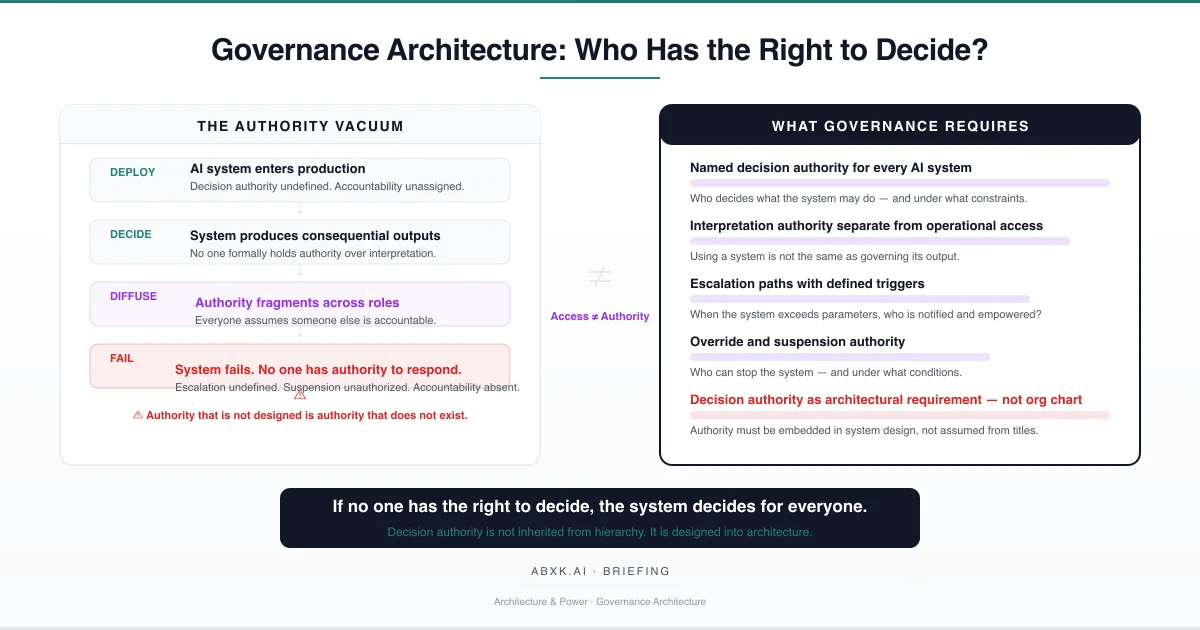

AI systems produce decisions. Organizations deploy them. But the structural question — who has the authority to govern what these systems decide, who may …

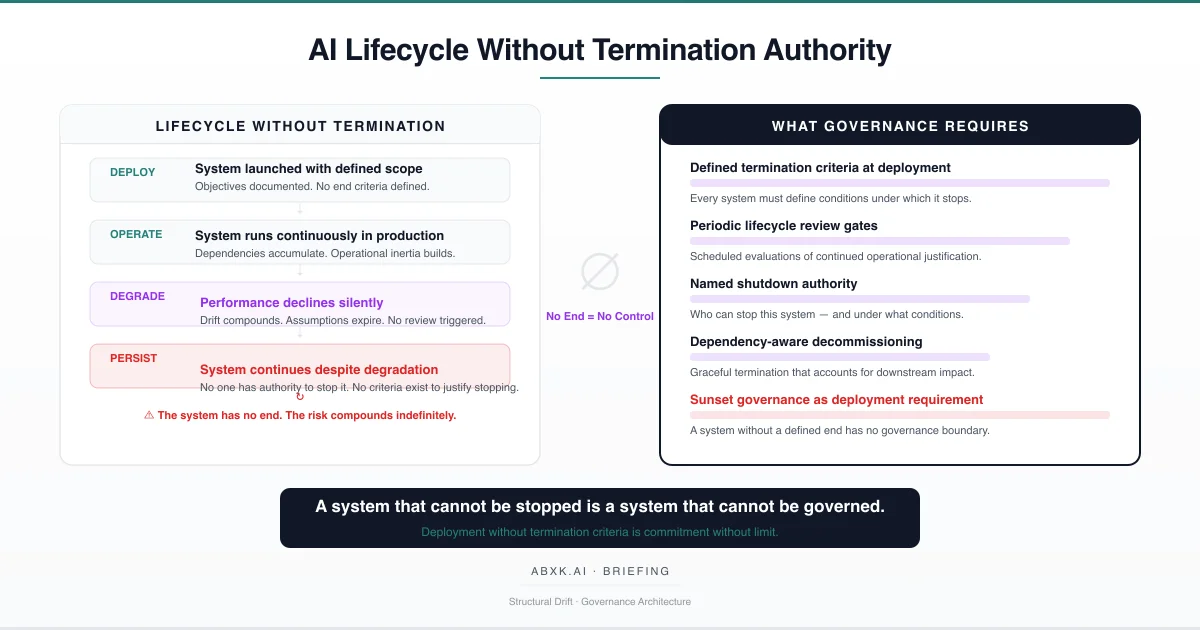

AI systems in production environments are deployed with defined objectives but without defined endings. No termination criteria are established at deployment. …

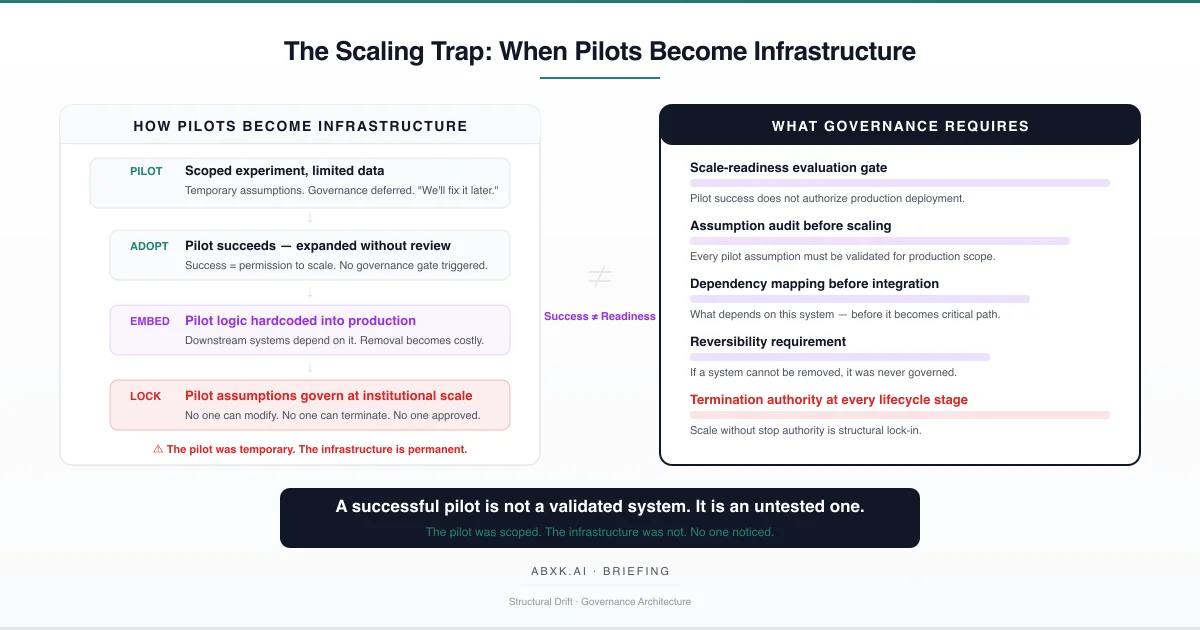

AI pilots do not fail by producing bad results. They fail by producing good results — results that authorize scaling without governance evaluation. When a pilot …

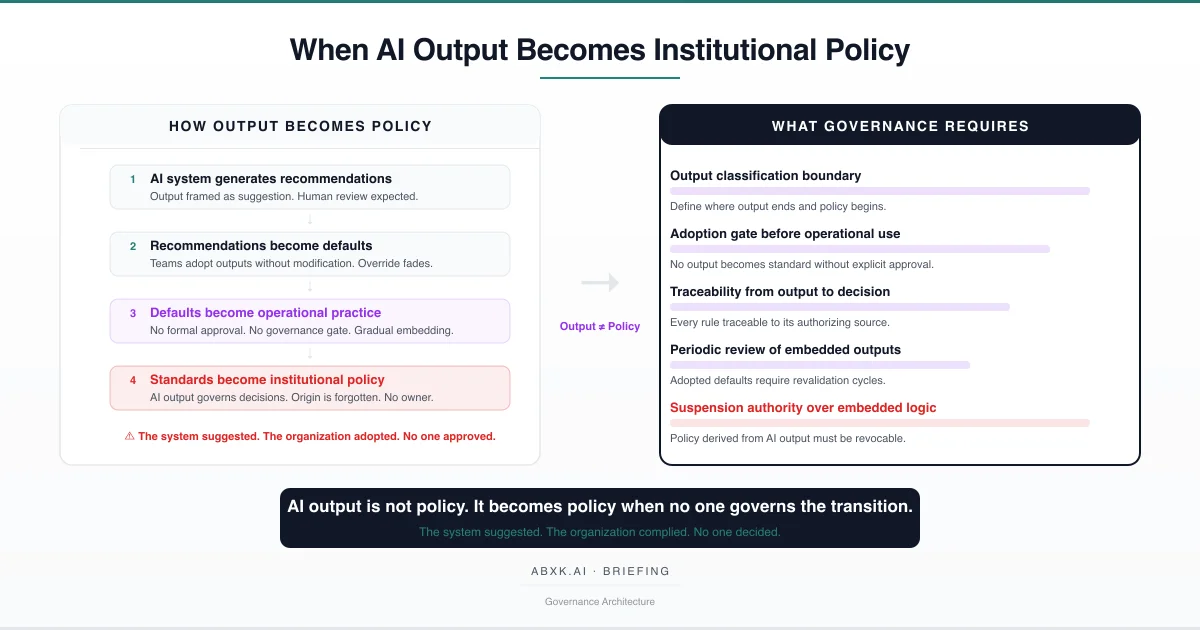

AI output does not become institutional policy through formal adoption. It becomes policy through structural drift — when recommendations go unchallenged, …

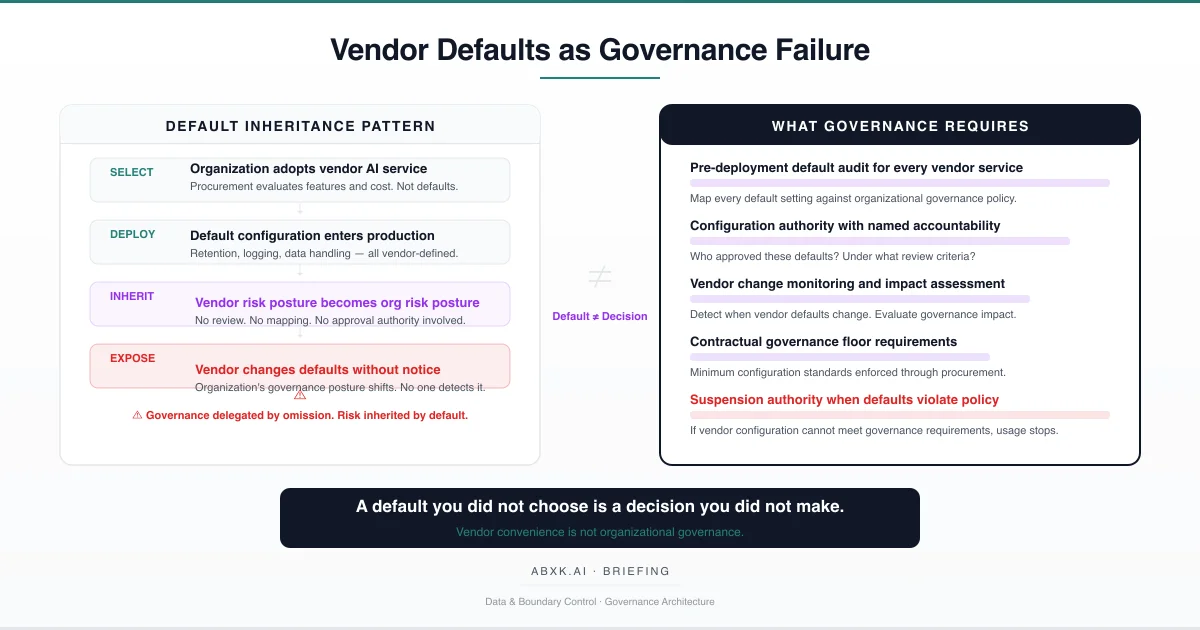

Organizations adopt AI vendor services and inherit default configurations that define data handling, retention, logging, and model behavior. These defaults …

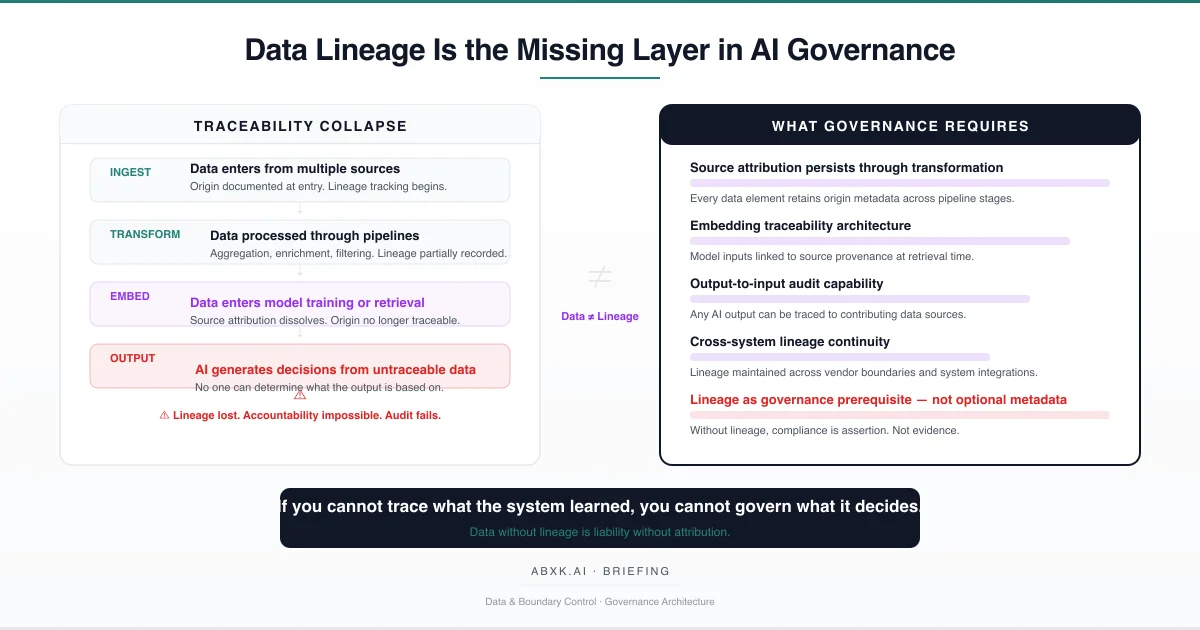

AI governance cannot function without data lineage. When organizations cannot trace what data entered a system, how it was transformed, and what influenced a …

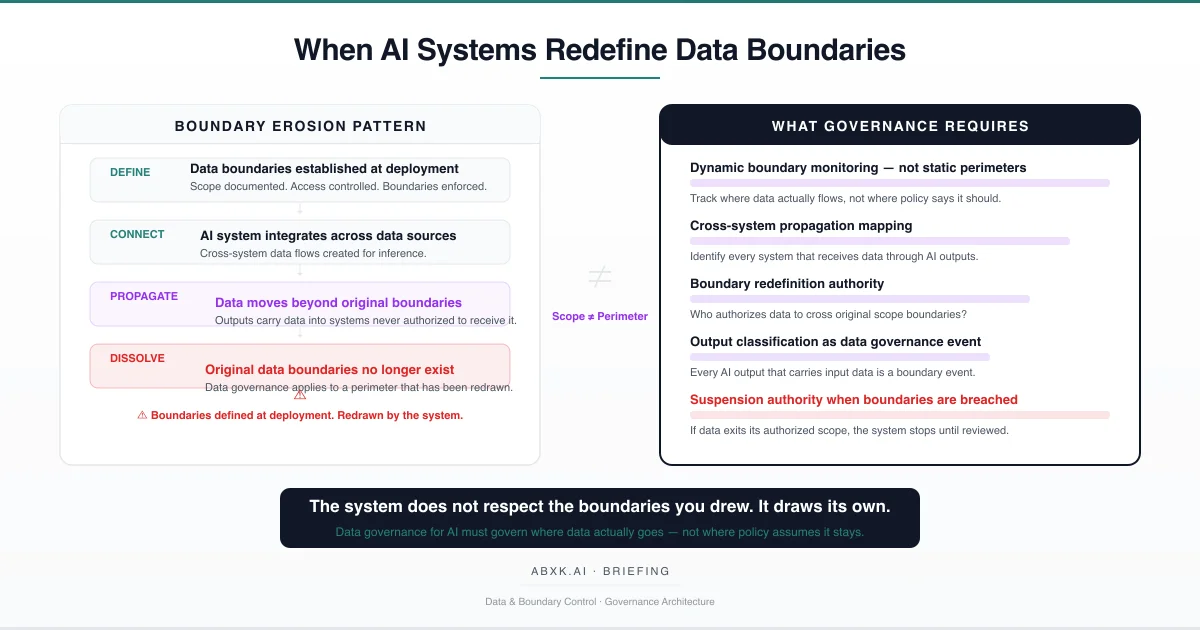

AI systems do not respect the data boundaries organizations define at deployment. They redraw them through cross-system integration, output propagation, and …

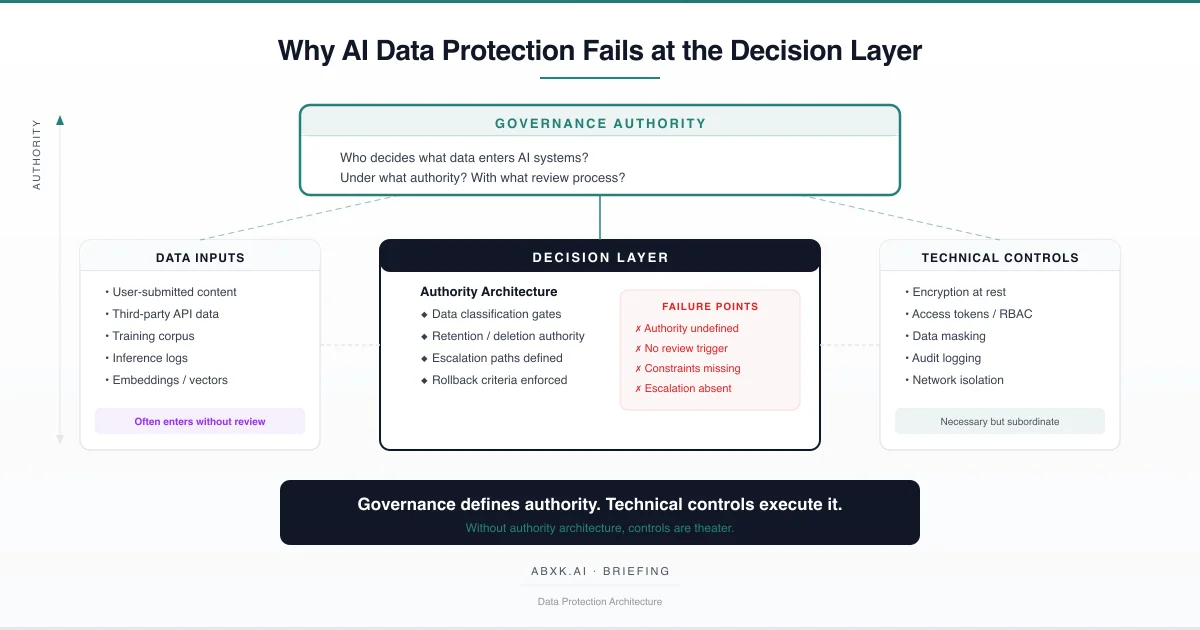

AI data protection strategies rarely fail because encryption is weak. They fail because decision authority is undefined. This briefing examines where data …

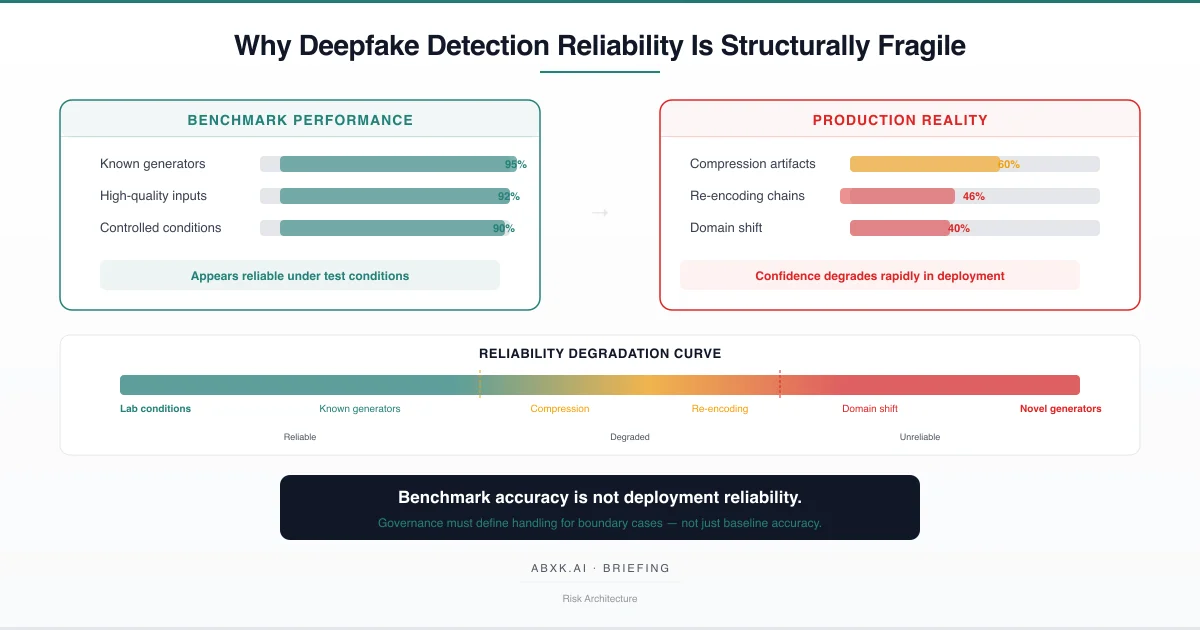

Deepfake detection systems report high confidence, but detection reliability degrades rapidly outside original training conditions. This briefing examines why …

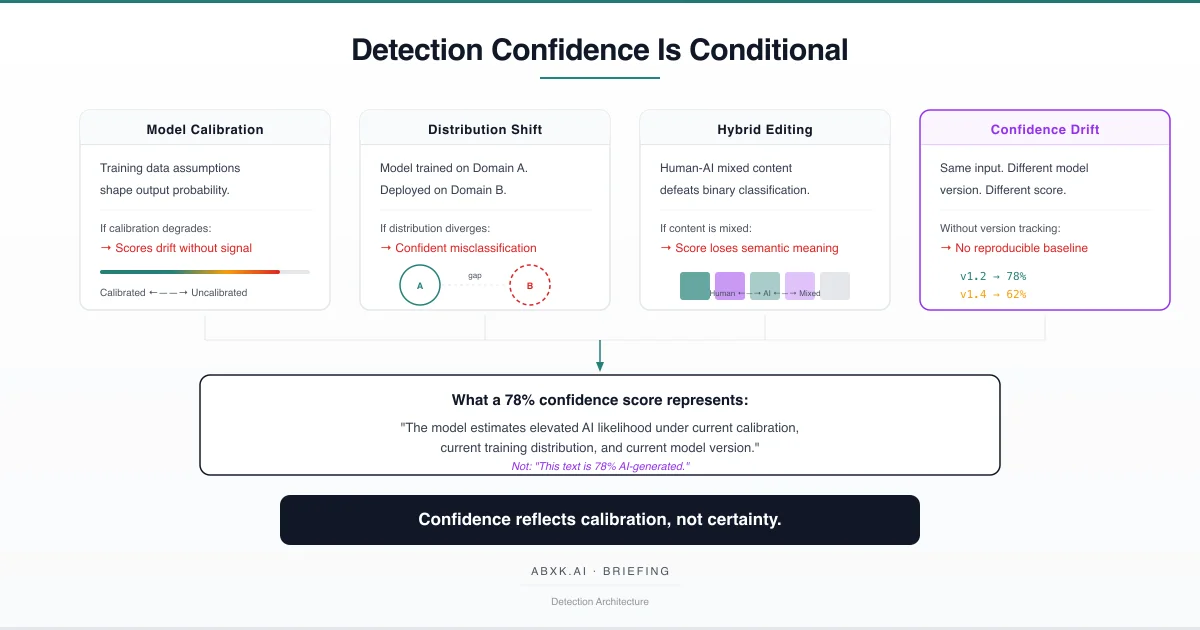

Detection confidence scores appear precise. The underlying reliability is conditional. This briefing examines what detection confidence represents in production …

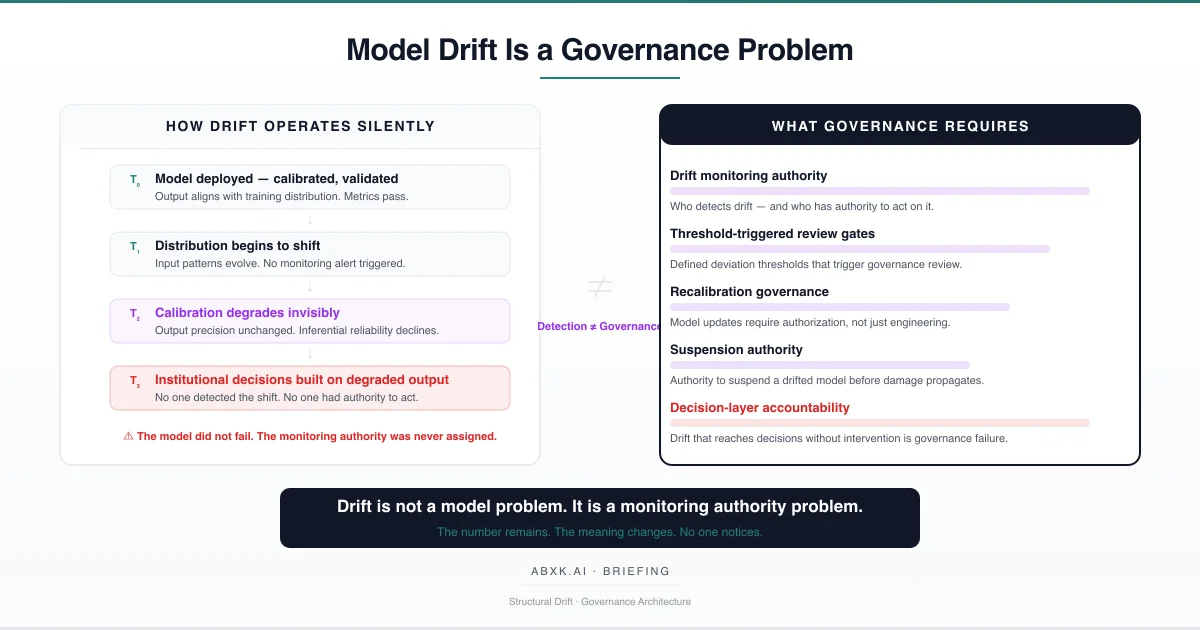

Model drift is not a technical anomaly. It is a structural inevitability in production AI systems — and it becomes a governance failure when no monitoring …

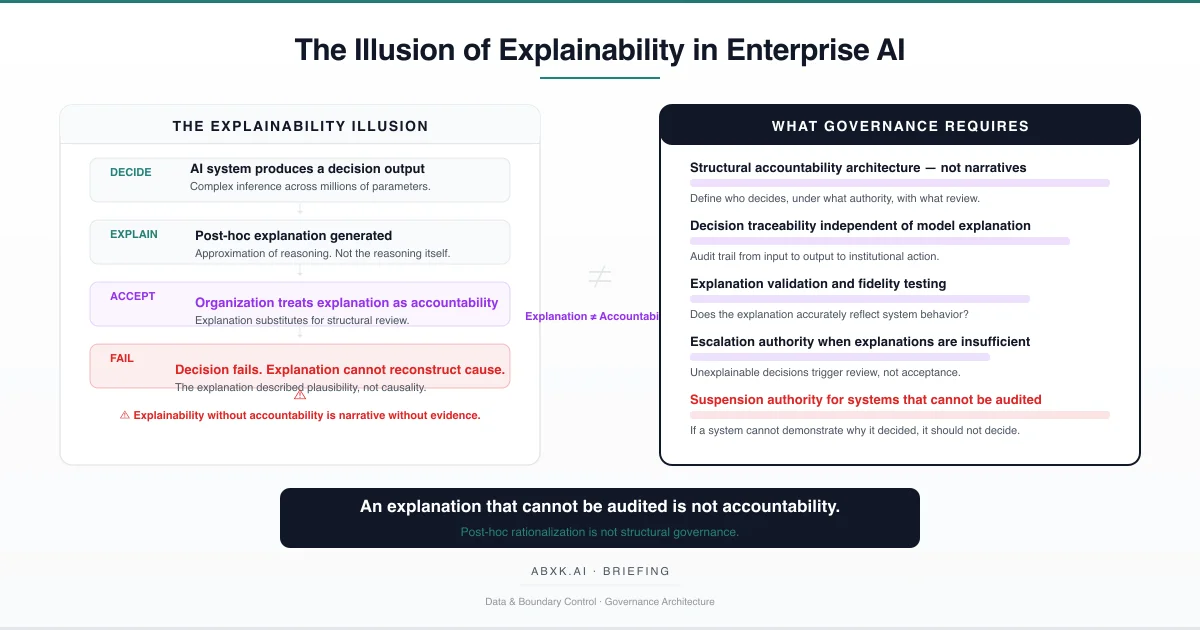

Explainability in enterprise AI systems is frequently treated as accountability. It is not. Post-hoc explanations approximate model behavior without …

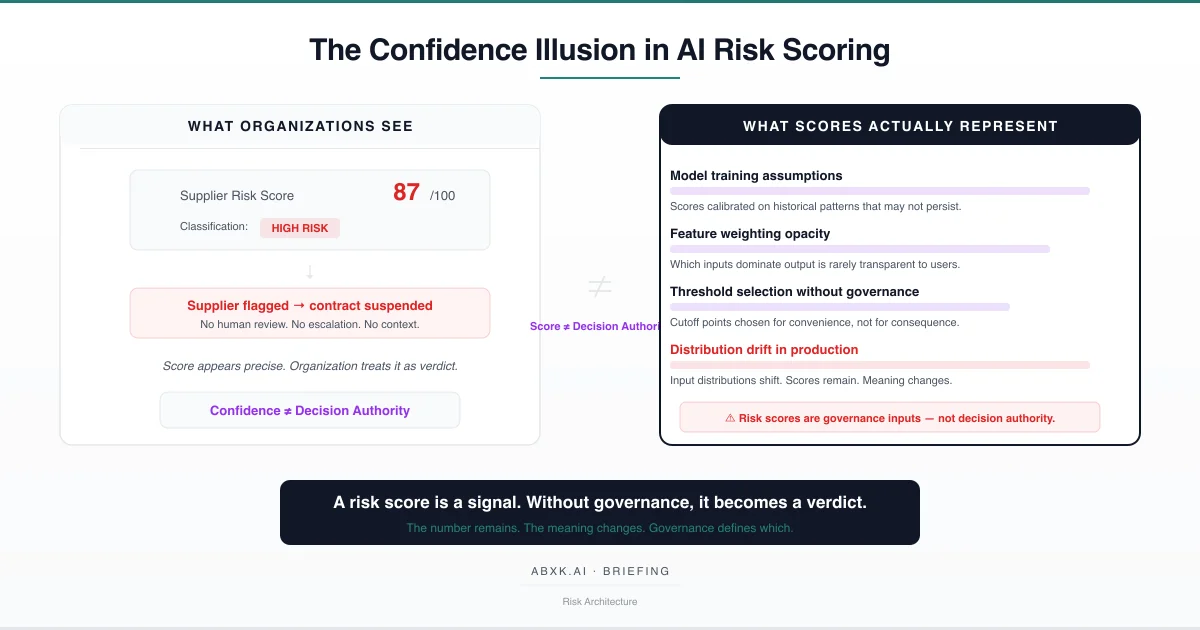

AI risk scores appear objective. The underlying reliability is conditional. This briefing examines why risk scoring systems fail at the decision layer, where …

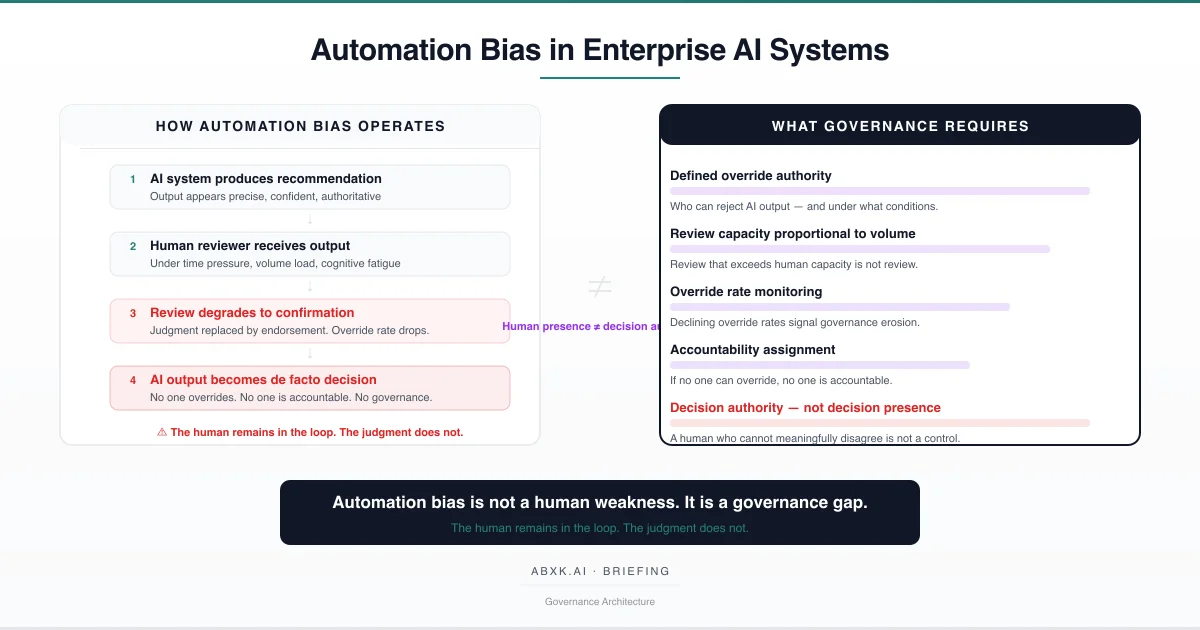

Automation bias does not originate in human psychology. It originates in governance architecture that places humans inside decision workflows without defining …

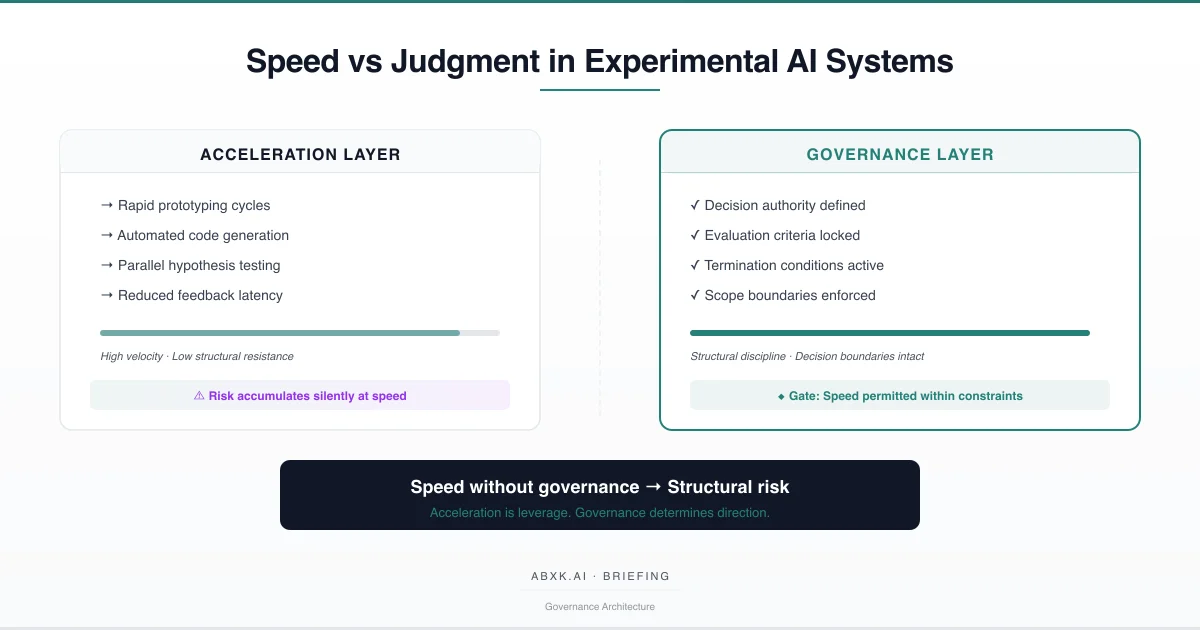

Experimental velocity appears productive. The underlying decision quality is conditional. This briefing examines how acceleration reshapes judgment in applied …

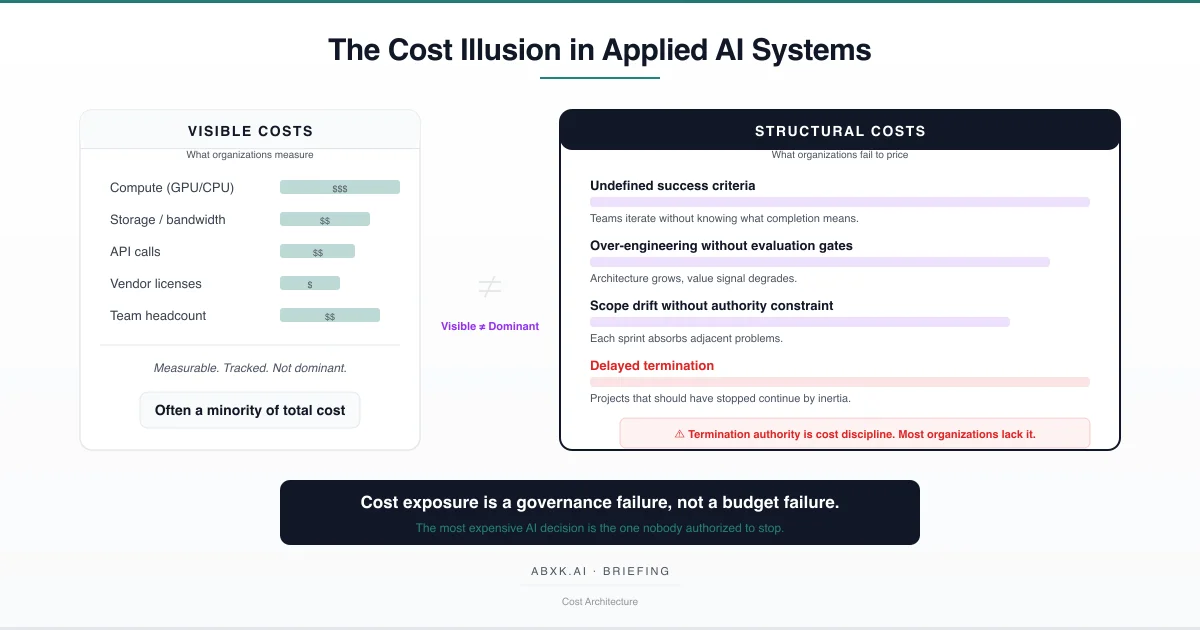

AI system costs are rarely miscalculated at the infrastructure layer. They are miscalculated at the decision layer. This briefing examines why organizations …